Altoura is a spatial computing SaaS platform that enables rich virtual experiences for remote collaboration. We’re known for delivering immersive experiences with industry-best visual fidelity of spatial environments. This high fidelity dramatically improves the experiences we enable for frontline workers, such as training and remote collaboration sessions. But creating photorealistic 3D environments takes considerable technical skill.

Processing Needs for Spatial Computing

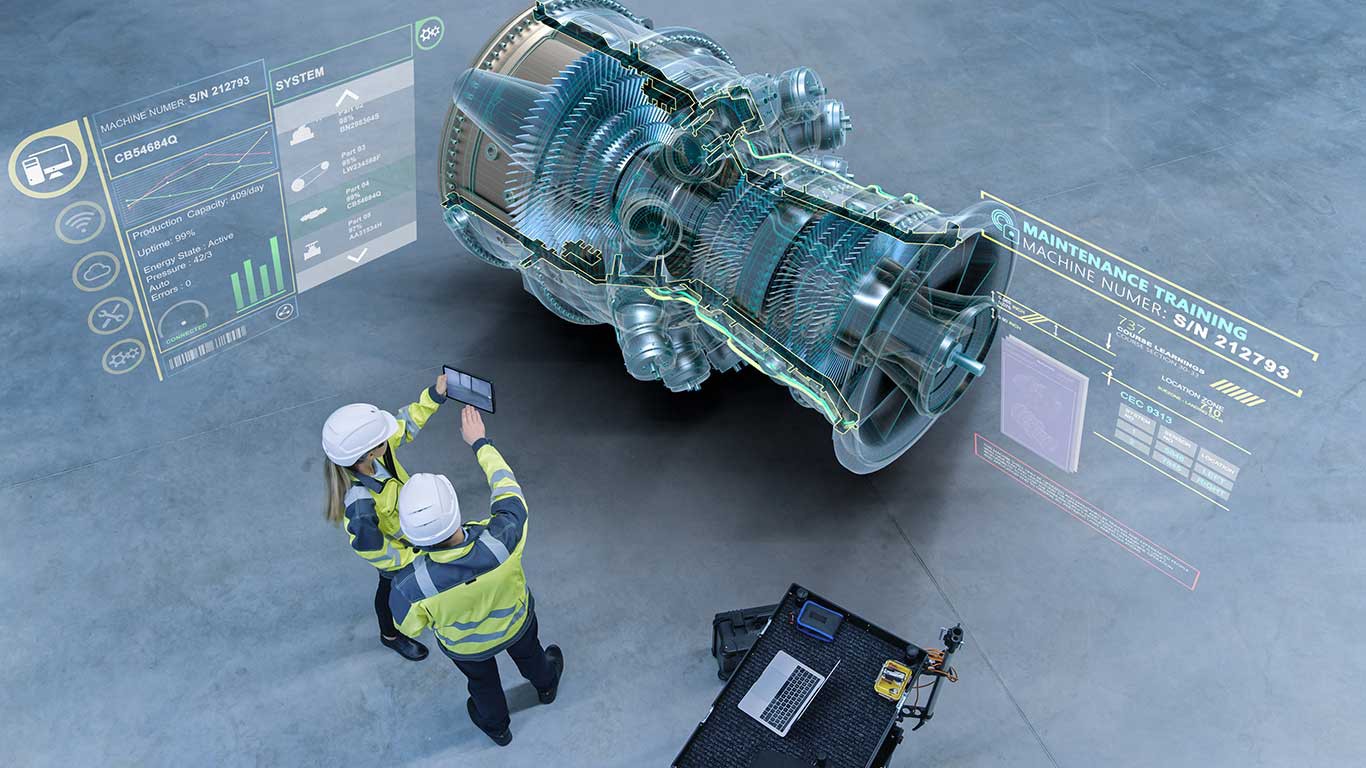

When creating these virtual 3D spaces and environments, which are sometimes on the scale of multiple building floors, rows of manufacturing equipment, or large swaths of land, our engineers spend a lot of time optimizing the 3D models for accuracy and realism. Rendering complex graphics in a device-agnostic application like Altoura is a technical challenge because the hardware processing needs are significantly higher than those of traditional applications used by information workers.

It might seem that a good tradeoff would be to reduce visual fidelity in exchange for faster processing. However, sacrificing detail in a virtual 3D environment means giving up what makes spatial computing (AR/VR) experiences so powerful. A lack of visual fidelity, or any inaccuracy, reduces the efficacy of business scenarios like training, on-the-job task guidance, simulations, communication, design reviews, and digital tours.

High Fidelity Matters

In fact, high-fidelity 3D environments can be mission critical. For example, imagine an airline pilot trainee using a flight simulator with realistic graphics and cockpit controls. In this scenario, fidelity between the training scenario and real life is critical to ensure pilot readiness and, ultimately, passenger safety.

The same logic applies to a host of scenarios across many industries. We work closely with manufacturers, retailers, healthcare giants, and other industry leaders to implement solutions, and that work shows that productivity, efficiency, and quality depend heavily on how closely the virtual remote collaboration experience matches the real one.

Device Computational Limits

Based on our extensive customer engagements, we’ve also learned that every device has different computational limits. As a result, it is tough to set a single polygon budget for 3D content. One way we account for differences in device capability is by packaging content differently based on device type. By dynamically loading and unloading content optimized for the end user’s platform, Altoura adapts to your device, whether it is a Microsoft HoloLens 2, Oculus Quest, PC, iOS, or Android device.
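To make the idea of per-device packaging concrete, here is a minimal Python sketch. It is not Altoura's implementation: the `Device` enum, the `ContentPackage` type, the `pick_package` helper, and every triangle budget below are hypothetical placeholders chosen for illustration, not measured device limits.

```python
from dataclasses import dataclass
from enum import Enum


class Device(Enum):
    HOLOLENS_2 = "hololens2"
    OCULUS_QUEST = "quest"
    PC = "pc"
    IOS = "ios"
    ANDROID = "android"


@dataclass(frozen=True)
class ContentPackage:
    name: str
    triangle_count: int


# Illustrative placeholder budgets only; real limits vary by device and scene.
TRIANGLE_BUDGET = {
    Device.HOLOLENS_2: 500_000,
    Device.OCULUS_QUEST: 750_000,
    Device.IOS: 1_000_000,
    Device.ANDROID: 1_000_000,
    Device.PC: 5_000_000,
}


def pick_package(device: Device, variants: list[ContentPackage]) -> ContentPackage:
    """Return the most detailed variant that fits the device's budget.

    If no variant fits, fall back to the lightest one so something still loads.
    """
    if not variants:
        raise ValueError("at least one content variant is required")
    budget = TRIANGLE_BUDGET[device]
    fitting = [v for v in variants if v.triangle_count <= budget]
    if fitting:
        return max(fitting, key=lambda v: v.triangle_count)
    return min(variants, key=lambda v: v.triangle_count)


variants = [
    ContentPackage("factory_low", 100_000),
    ContentPackage("factory_medium", 1_000_000),
    ContentPackage("factory_high", 18_000_000),
]
print(pick_package(Device.PC, variants).name)
print(pick_package(Device.HOLOLENS_2, variants).name)
```

In this sketch the same scene ships as several variants, and the client picks the richest one its device can handle. Note that the most detailed variant fits none of the placeholder budgets, which is the gap that offloading rendering to the cloud is meant to close.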

While this approach can remedy many device hardware limitations, it does not solve device processing limitations; it only adjusts to them. To address these limitations, we have started to build a flow for Azure Remote Rendering, a cloud service that enables Altoura to offload some of the rendering from your physical device to the cloud.

The Role of Cloud Services

By implementing Azure Remote Rendering with Altoura solutions, we do not have to sacrifice the visual accuracy of our 3D content. Remote rendering shifts the rendering workload to high-end GPUs in the cloud. At a high level, the target device sends our servers updates on the user’s current and predicted positions in the virtual space, and in return it receives and displays an image of the model at the updated position and angle, at 60 frames per second. Because this round trip happens so quickly, the user sees a highly detailed streamed view of a model that can contain upwards of 18 million triangles. With a reliable network, this approach delivers low latency without compromising visual fidelity.
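The current-plus-predicted position exchange can be illustrated with a short Python sketch. This is a simplified model and does not use the Azure Remote Rendering SDK: the `Pose` type, `predict_pose`, `build_pose_update`, and the simple linear extrapolation are all assumptions made for illustration. The only figure taken from the text is the 60 frames per second target, which works out to a budget of roughly 16.7 ms per frame.

```python
from dataclasses import dataclass

TARGET_FPS = 60
FRAME_BUDGET_MS = 1000 / TARGET_FPS  # roughly 16.7 ms per frame


@dataclass(frozen=True)
class Pose:
    position: tuple[float, float, float]  # meters, in the virtual space
    yaw_degrees: float                    # heading around the vertical axis


def predict_pose(current: Pose,
                 velocity: tuple[float, float, float],
                 yaw_rate: float,
                 lookahead_s: float) -> Pose:
    """Linearly extrapolate the pose `lookahead_s` seconds into the future."""
    x, y, z = current.position
    vx, vy, vz = velocity
    return Pose(
        position=(x + vx * lookahead_s,
                  y + vy * lookahead_s,
                  z + vz * lookahead_s),
        yaw_degrees=(current.yaw_degrees + yaw_rate * lookahead_s) % 360.0,
    )


def build_pose_update(current: Pose,
                      velocity: tuple[float, float, float],
                      yaw_rate: float,
                      round_trip_ms: float) -> dict:
    """Bundle the current pose with a prediction for when the frame is shown.

    The lookahead covers the (assumed, measured elsewhere) network round trip
    plus one frame of display time.
    """
    lookahead_ms = round_trip_ms + FRAME_BUDGET_MS
    predicted = predict_pose(current, velocity, yaw_rate, lookahead_ms / 1000)
    return {"current": current, "predicted": predicted, "lookahead_ms": lookahead_ms}


update = build_pose_update(
    Pose(position=(0.0, 1.6, 0.0), yaw_degrees=350.0),
    velocity=(1.0, 0.0, 0.0),   # walking forward at 1 m/s
    yaw_rate=100.0,             # turning at 100 degrees per second
    round_trip_ms=40.0,
)
print(update["predicted"])
```

Sending a predicted pose alongside the current one lets the server render the frame for where the user is likely to be when the image arrives, rather than where they were when the request left the device. A production system would likely use a more robust predictor than straight-line extrapolation.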

As spatial computing adoption grows and hardware rapidly becomes more advanced, we have many more cutting-edge challenges to work on. I look forward to sharing more technical details of our solution architecture in future blog posts.

Want to learn more? Have feedback? I’d love to hear from you. Reach me at Alana@altoura.com
